Storm: a distributed real-time analytics and computation system

Storm concepts

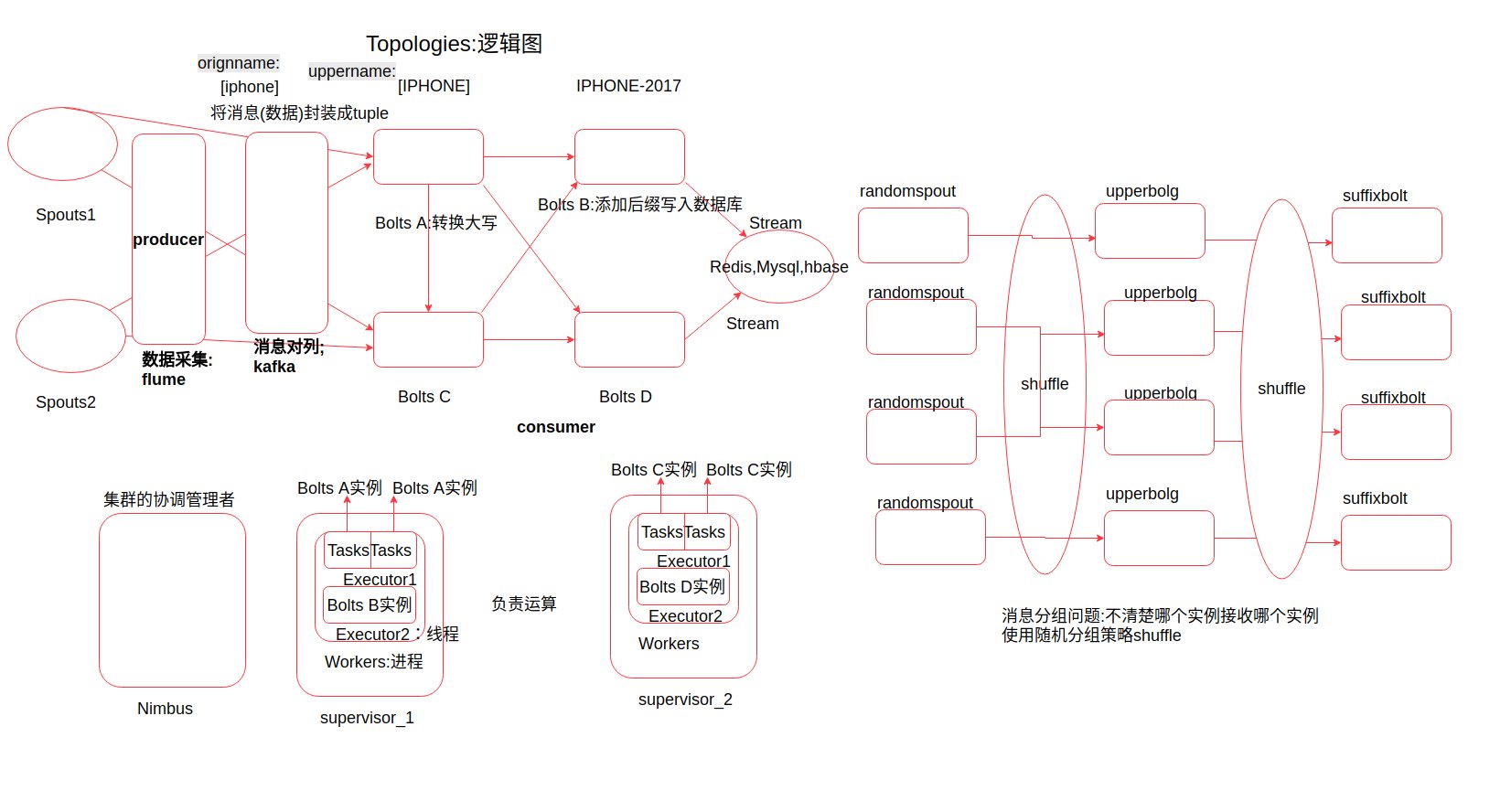

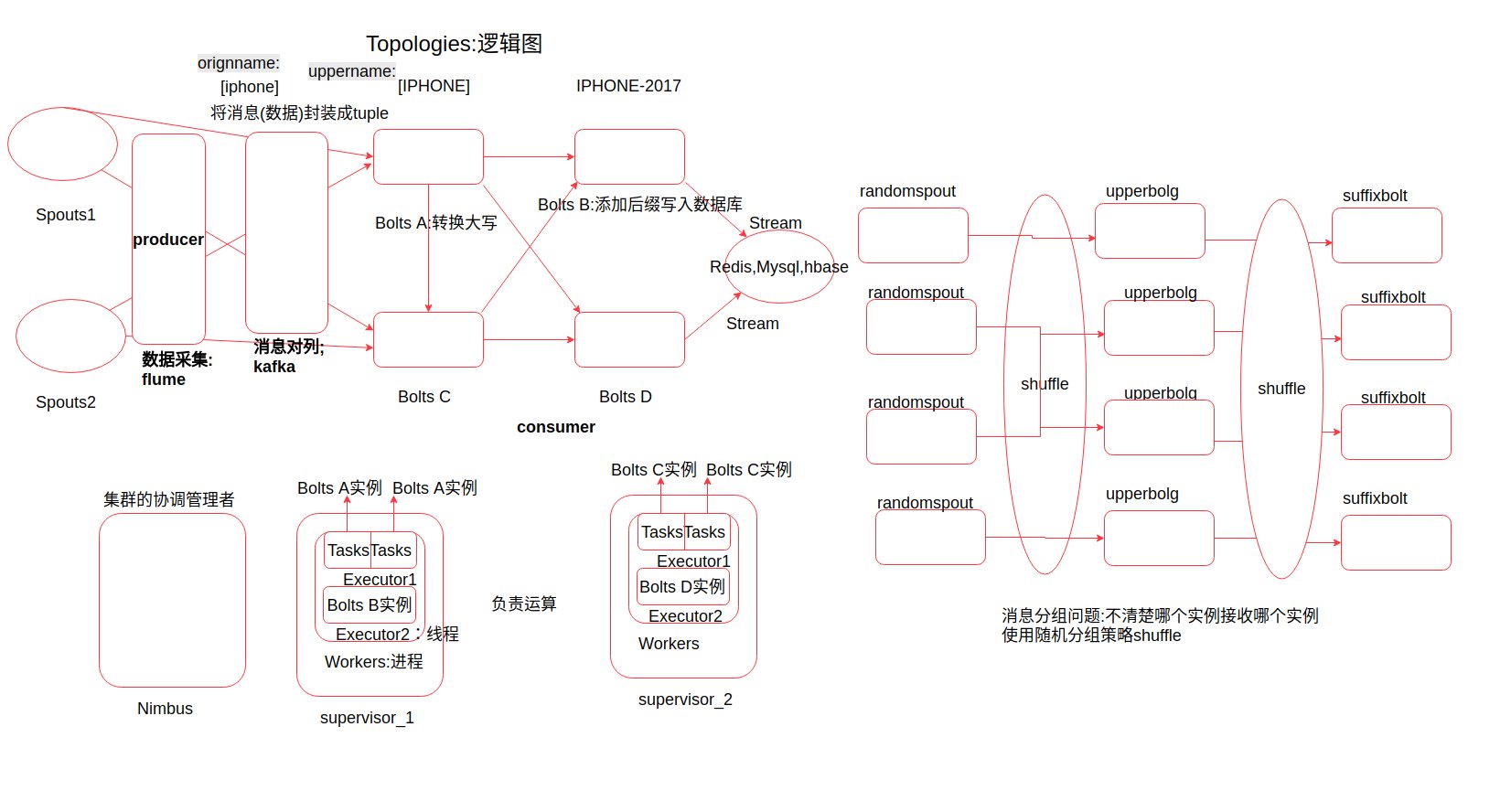

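Topologies: a topology, also referred to as a job.

Spouts: the message sources of a cluster (topology).

Bolts: the processing logic units at the nodes of a cluster (topology).

Configuration: the topology's configuration.

tuple: a message tuple (the data format passed between Spouts and Bolts, a wrapper with a user-defined format).

Stream: a stream of tuples; different tuples (messages being processed) can follow different paths through the topology.

Stream groupings: the strategies for grouping a stream, that is, for deciding which task receives each tuple.

Tasks: the units that do the processing.

Executor: a worker thread.

Workers: worker processes.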
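To make the idea of a stream grouping more concrete, the sketch below imitates a fields grouping in plain shell: tuples that carry the same key are always routed to the same task. The helper name `task_for_key` and the use of `cksum` as the hash are assumptions made for illustration only; Storm applies its own hashing internally.

```shell
#!/bin/sh
# Illustration only: map a tuple's key field to one of num_tasks tasks.
# task_for_key is a hypothetical helper; cksum stands in for Storm's own hash.
task_for_key() {
    key=$1
    num_tasks=$2
    # cksum prints "<crc> <byte count>"; keep only the CRC.
    hash=$(printf '%s' "$key" | cksum | cut -d ' ' -f 1)
    echo $((hash % num_tasks))
}

# Route a few word tuples to 4 bolt tasks; repeated words land on the same task.
for word in storm bolt storm spout bolt; do
    echo "$word -> task $(task_for_key "$word" 4)"
done
```

A shuffle grouping, by contrast, would pick the task without looking at the key, so the same word could land on different tasks.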

Setting up a Storm cluster

First install a ZooKeeper cluster on cluster1 through cluster3.

    # Archive and remove any previous installation, then unpack a fresh copy
    tar -zcvf z.tar.gz zookeeper-3.4.10
    rm -rf /root/zookeeper-3.4.10
    tar -zxvf zookeeper-3.4.10.tar.gz

    # Create zoo.cfg from the sample
    cd zookeeper-3.4.10/conf/
    mv zoo_sample.cfg zoo.cfg
    vim zoo.cfg
    # In zoo.cfg, set:
    #   dataDir=/root/zookeeper-3.4.10/data
    #   server.1=cluster1:2888:3888
    #   server.2=cluster2:2888:3888
    #   server.3=cluster3:2888:3888

    # Create the data directory and this node's id (cluster1 is server.1)
    cd ../ && mkdir data && cd data
    echo 1 > myid

    # Copy the configured installation to the other nodes
    cd
    scp -r ./zookeeper-3.4.10 root@cluster2:/root
    scp -r ./zookeeper-3.4.10 root@cluster3:/root

    # On cluster2:
    echo 2 > /root/zookeeper-3.4.10/data/myid
    # On cluster3:
    echo 3 > /root/zookeeper-3.4.10/data/myid

    # On every node:
    zkServer.sh start
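The `zoo.cfg` entries and the `myid` values have to agree: the number in `server.N` must match the `myid` on host N. A minimal sketch of generating both from one ordered host list follows. The helper names `zk_config` and `zk_myid` are hypothetical; the ports and paths are the ones used in the steps above.

```shell
#!/bin/sh
# Hypothetical helper: print the zoo.cfg lines for an ordered list of hosts.
# Usage: zk_config <dataDir> <host1> <host2> ...
zk_config() {
    data_dir=$1
    shift
    echo "dataDir=$data_dir"
    id=1
    for host in "$@"; do
        # 2888 and 3888 are the two ports used in the configuration above.
        echo "server.$id=$host:2888:3888"
        id=$((id + 1))
    done
}

# Hypothetical helper: print the myid for one host, i.e. its 1-based
# position in the host list. Returns non-zero if the host is not listed.
# Usage: zk_myid <target> <host1> <host2> ...
zk_myid() {
    target=$1
    shift
    id=1
    for host in "$@"; do
        if [ "$host" = "$target" ]; then
            echo "$id"
            return 0
        fi
        id=$((id + 1))
    done
    return 1
}

# Print the settings for the three-node cluster described above.
zk_config /root/zookeeper-3.4.10/data cluster1 cluster2 cluster3
```

The printed lines are meant to be reviewed and merged into `zoo.cfg` by hand, since the sample file already contains a `dataDir` entry. Once the servers are started, `zkServer.sh status` on each node reports whether it is running as leader or follower.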

Copy the installation package

    scp ./apache-storm-1.1.1.tar.gz root@cluster1:/root/

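Run on cluster1

    tar -zxvf apache-storm-1.1.1.tar.gz
    mv apache-storm-1.1.1 storm
    cd storm/conf
    vim storm.yaml
    # In storm.yaml, set:
    #   storm.zookeeper.servers:
    #     - "cluster1"
    #     - "cluster2"
    #     - "cluster3"
    #   nimbus.seeds: ["cluster1"]
    #   storm.zookeeper.root: "/root/zookeeper-3.4.10"
    # Note: storm.zookeeper.root is the znode path under which Storm keeps
    # its state inside ZooKeeper, not a directory on disk.

    # Copy the configured installation to the other nodes
    cd
    scp -r storm root@cluster2:/root/
    scp -r storm root@cluster3:/root/

    # Put the storm command on PATH (repeat on cluster2 and cluster3)
    echo "export PATH=\${PATH}:/root/storm/bin" >> ~/.bashrc
    source ~/.bashrc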
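Instead of editing `storm.yaml` interactively, the same settings can be produced by a small script, which helps keep the file identical across nodes. The sketch below is one way to do it; `storm_yaml` is a hypothetical helper name, and the keys and values are the ones used in the step above.

```shell
#!/bin/sh
# Hypothetical helper: print the storm.yaml settings used in this walkthrough.
# Usage: storm_yaml <nimbus host> <zookeeper root znode> <zk host1> <zk host2> ...
storm_yaml() {
    nimbus=$1
    zk_root=$2
    shift 2
    echo "storm.zookeeper.servers:"
    for host in "$@"; do
        # YAML list items must be indented consistently under the key.
        printf '  - "%s"\n' "$host"
    done
    printf 'nimbus.seeds: ["%s"]\n' "$nimbus"
    printf 'storm.zookeeper.root: "%s"\n' "$zk_root"
}

# Print the configuration for the cluster described above.
storm_yaml cluster1 /root/zookeeper-3.4.10 cluster1 cluster2 cluster3
```

Redirecting the output with `>> /root/storm/conf/storm.yaml` would append it to the shipped file, assuming the installation path from the steps above.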

Run on the nimbus host (cluster1)

    nohup storm nimbus 1>/dev/null 2>&1 &
    nohup storm ui 1>/dev/null 2>&1 &

Then open http://cluster1:8080/ to view the Storm UI.

Run on the supervisor hosts (cluster2 and cluster3) once nimbus is up. Each supervisor that is added is then managed by nimbus.

    nohup storm supervisor 1>/dev/null 2>&1 &
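Because the supervisors should only be started after nimbus is running, it can help to poll for nimbus before launching them. A minimal sketch of such a wait loop follows. `wait_until` is a hypothetical helper, and the probe in the comment assumes that `nc` is installed and that nimbus listens on its default Thrift port, 6627.

```shell
#!/bin/sh
# Hypothetical helper: run a probe command until it succeeds.
# Usage: wait_until <attempts> <delay seconds> <command> [args...]
# Returns 0 as soon as the command succeeds, 1 if every attempt fails.
wait_until() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        "$@" >/dev/null 2>&1 && return 0
        # Pause between attempts, but not after the last one.
        [ "$i" -lt "$attempts" ] && sleep "$delay"
        i=$((i + 1))
    done
    return 1
}

# On a supervisor host this could be used as (assumed probe, not run here):
#   wait_until 30 2 nc -z cluster1 6627 && nohup storm supervisor 1>/dev/null 2>&1 &

# Harmless demonstration with a probe that always succeeds.
wait_until 3 0 true && echo "ready"
```

The probe is passed in as an ordinary command, so it can be swapped for any other check that suits the environment.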