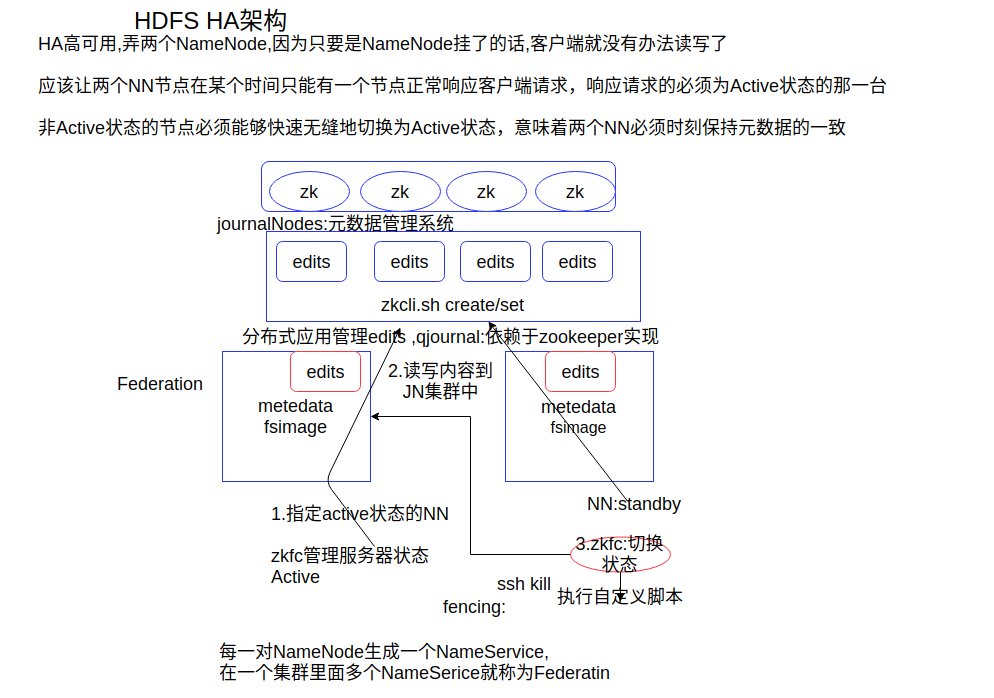

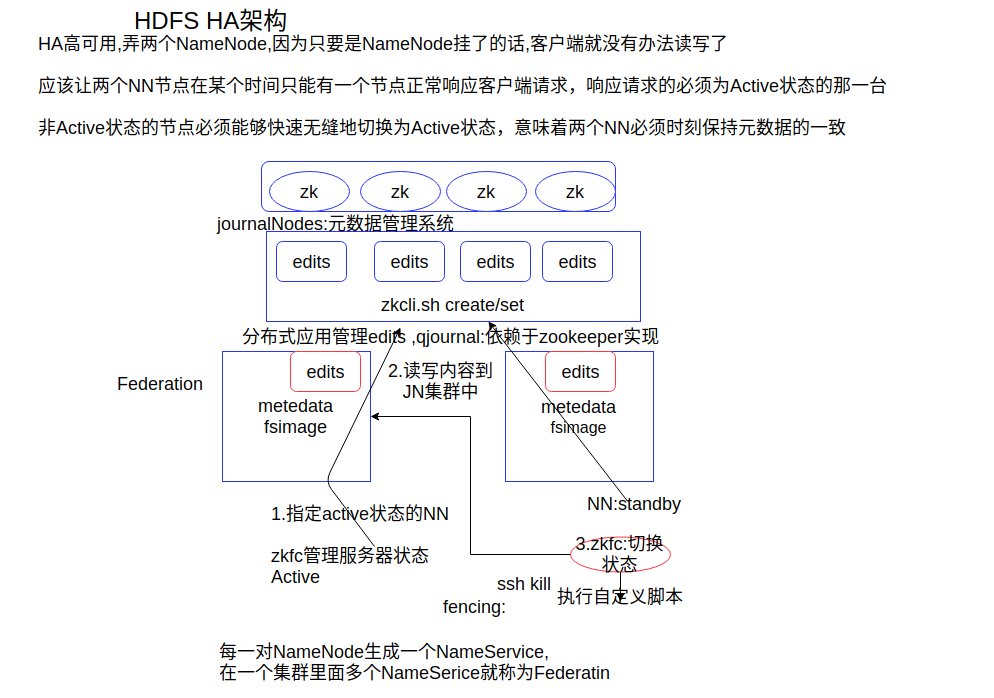

HDFS metadata is reliably protected, but Hadoop itself is not highly available (HA).

Setting up an HDFS HA (high-availability) cluster

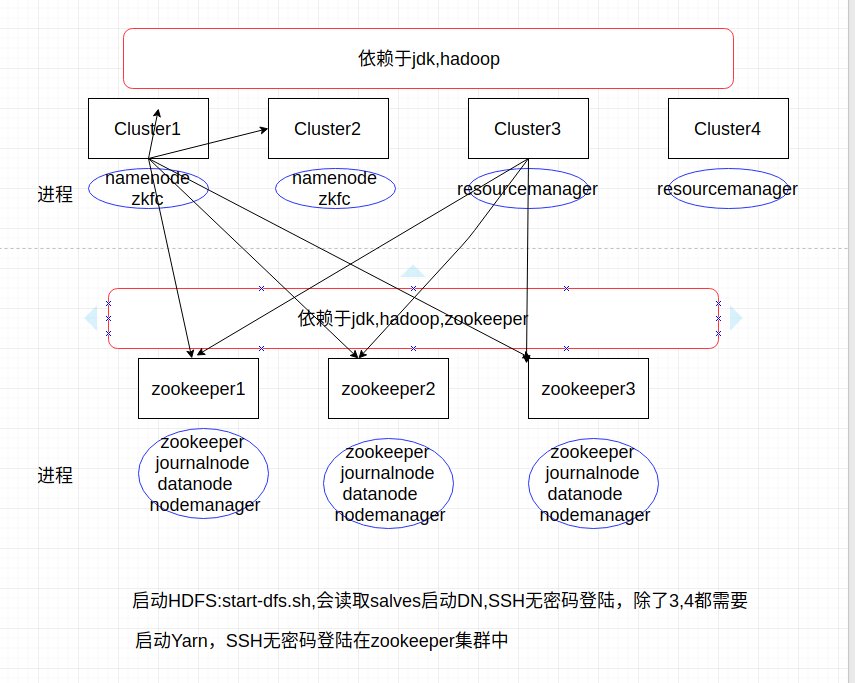

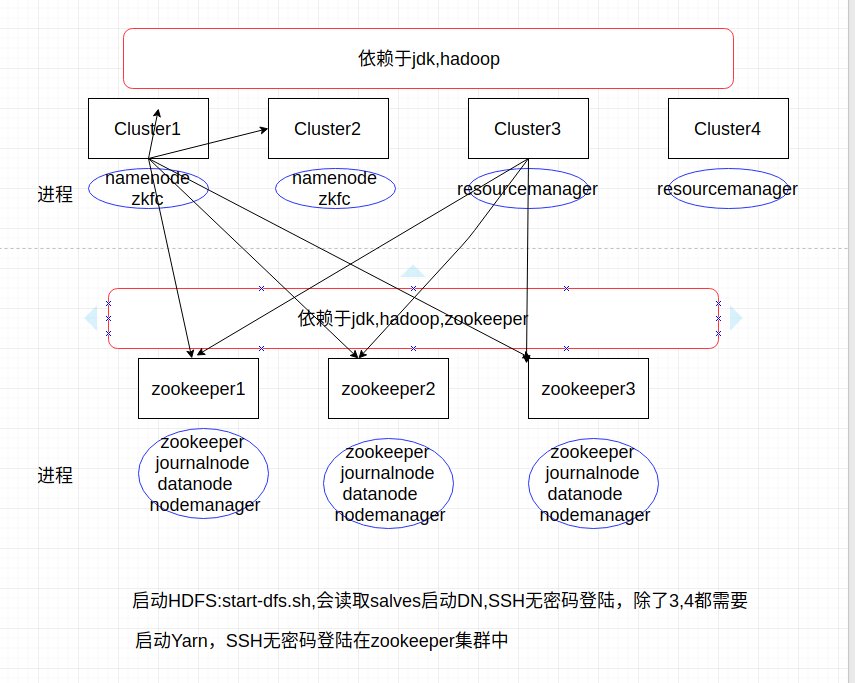

Cluster plan for 7 machines (512M, 8G per VM)

| Hostname | IP | Installed | Processes |
|---|---|---|---|
| cluster1 | 192.168.1.221 | jdk, hadoop | namenode, zkfc |
| cluster2 | 192.168.1.222 | jdk, hadoop | namenode, zkfc |
| cluster3 | 192.168.1.223 | jdk, hadoop | resourcemanager |
| cluster4 | 192.168.1.224 | jdk, hadoop | resourcemanager |
| zookeeper1 | 192.168.1.225 | jdk, hadoop, zookeeper | zookeeper, journalnode, datanode, nodemanager |
| zookeeper2 | 192.168.1.226 | jdk, hadoop, zookeeper | zookeeper, journalnode, datanode, nodemanager |
| zookeeper3 | 192.168.1.227 | jdk, hadoop, zookeeper | zookeeper, journalnode, datanode, nodemanager |
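Every later step addresses the machines by hostname (for example `ssh-copy-id cluster2` or `scp ... root@zookeeper1:...`), so each node needs these names in /etc/hosts; the install script may already handle this. As a sketch, the plan above can be turned into hosts entries with a small function (`print_hosts` is a hypothetical helper, not part of the install script):

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of install_hadoop_cluster.sh): print the
# /etc/hosts entries for the 7-node plan, 192.168.1.221 .. 192.168.1.227.
print_hosts() {
  local ip=221 name
  for name in cluster1 cluster2 cluster3 cluster4 \
              zookeeper1 zookeeper2 zookeeper3; do
    echo "192.168.1.${ip} ${name}"
    ip=$((ip + 1))
  done
}

# Usage (as root, only if the entries are not there yet):
#   print_hosts >> /etc/hosts
```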

Hands-on (all of the following steps are run as root)

Prerequisites

- The network interfaces have been configured and the network works.

- The three packages hadoop-2.8.1.tar.gz, jdk8.tar.gz and zookeeper-3.4.10.tar.gz are in /root.

Run the following script on one VM (cluster1):

```shell
curl https://file.femnyy.com/file/install_hadoop_cluster.sh | sudo sh
```

Then clone 6 VMs from it, boot each of them, change its IP address and restart the network:

```shell
vim /etc/sysconfig/network-scripts/ifcfg-enp0s3
vim /etc/sysconfig/network-scripts/ifcfg-enp0s8
service network restart
ifconfig
```
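Instead of editing each clone's ifcfg file by hand in vim, the IP address can be rewritten with sed. A minimal sketch, assuming the interface that carries the 192.168.1.x address is statically configured with an `IPADDR=` line (which of enp0s3/enp0s8 that is depends on your VM network setup); `set_ipaddr` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: set IPADDR in an ifcfg file, replacing an existing
# IPADDR= line or appending one if the file has none.
# Assumes a static configuration (BOOTPROTO=static) and GNU sed (-i), as on CentOS.
set_ipaddr() {
  local file=$1 ip=$2
  if grep -q '^IPADDR=' "$file"; then
    sed -i "s/^IPADDR=.*/IPADDR=${ip}/" "$file"
  else
    echo "IPADDR=${ip}" >> "$file"
  fi
}

# Usage on the second clone, assuming enp0s8 is the 192.168.1.x interface:
#   set_ipaddr /etc/sysconfig/network-scripts/ifcfg-enp0s8 192.168.1.222
#   service network restart
```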

Next, set up password-less SSH login from cluster1, running ssh-copy-id once for each of the listed hosts:

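```shell
# ssh-copy-id accepts a single host per call, so loop over the list
for host in cluster1 cluster2 zookeeper1 zookeeper2 zookeeper3; do ssh-copy-id "$host"; done
```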

On cluster3, do the same for each of the listed hosts:

```shell
# one ssh-copy-id call per host
for host in zookeeper1 zookeeper2 zookeeper3; do ssh-copy-id "$host"; done
```

On zookeeper2, run:

```shell
echo 2 > /root/zookeeper-3.4.10/data/myid
```

On zookeeper3, run:

```shell
echo 3 > /root/zookeeper-3.4.10/data/myid
```
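The myid value must match the `server.N` line for that host in zoo.cfg. Since the hostnames already end in the server number, it can be derived from the hostname. A sketch assuming zoo.cfg lists server.1=zookeeper1..., server.2=zookeeper2..., server.3=zookeeper3... (the install script's zoo.cfg is not shown here); `write_myid` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: derive the ZooKeeper myid from a hostname of the form
# zookeeperN and write it to the data directory used in this guide.
# The number must match the server.N entry for this host in zoo.cfg (assumed).
write_myid() {
  local host=$1 datadir=${2:-/root/zookeeper-3.4.10/data}
  local id=${host#zookeeper}
  case $id in
    ''|*[!0-9]*) echo "not a zookeeperN hostname: $host" >&2; return 1 ;;
  esac
  mkdir -p "$datadir"
  echo "$id" > "$datadir/myid"
}

# Usage on each ZooKeeper node:
#   write_myid "$(hostname)"
```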

Once the environment is configured, follow the steps below strictly in this order.

Start the ZooKeeper ensemble (start ZK on each of zookeeper1-3):

```shell
zkServer.sh start
zkServer.sh status
```
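`zkServer.sh status` reports each server's role on a line such as `Mode: follower`. In a healthy three-node ensemble exactly one server reports `leader` and the other two `follower`; any other combination (for example `standalone`, or an error) means the ensemble has not formed correctly. A small sketch that checks this; `zk_mode` and `ensemble_ok` are hypothetical helper names:

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking a 3-node ZooKeeper ensemble.

# Print the role from `zkServer.sh status` output read on stdin
# (leader, follower, standalone, ...); prints nothing if there is no Mode line.
zk_mode() {
  sed -n 's/^Mode: //p'
}

# Read one role per line on stdin; succeed only if exactly one line is
# "leader" and every other line is "follower".
ensemble_ok() {
  awk '$0 == "leader" { leaders++; next }
       $0 != "follower" { bad++ }
       END { exit !(leaders == 1 && bad == 0) }'
}

# Usage from cluster1 (sourcing /etc/profile to get zkServer.sh on PATH
# in a non-interactive ssh shell is an assumption):
#   for h in zookeeper1 zookeeper2 zookeeper3; do
#     ssh "root@$h" 'source /etc/profile; zkServer.sh status 2>&1' | zk_mode
#   done | ensemble_ok && echo "ensemble OK"
```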

Start the JournalNodes (on each of zookeeper1-3):

```shell
hadoop-daemon.sh start journalnode
jps   # JournalNode should now be listed
```
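`jps` prints one line per JVM: the PID first, then the main class name (e.g. `2345 JournalNode`). To check for a specific daemon without reading the list by eye, the output can be filtered on the second column; `has_java_proc` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if a JVM with exactly the given name appears
# in `jps` output read on stdin ("<pid> <name>" per line).
has_java_proc() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Usage on zookeeper1-3 after starting the JournalNode:
#   jps | has_java_proc JournalNode && echo "JournalNode is running"
```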

Format HDFS (on cluster1):

```shell
hdfs namenode -format
# copy the freshly formatted metadata so the second NameNode starts from the same namespace
scp -r /root/hadoop-2.8.1/tmp/ root@cluster2:/root/hadoop-2.8.1/
```

Format ZKFC (running it on cluster1 is enough), then check the result in ZooKeeper:

```shell
hdfs zkfc -formatZK
zkCli.sh
# inside the zkCli shell:
ls /
ls /hadoop-ha
get /hadoop-ha/ns1
```

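If you only changed configuration files and want to restart, there is no need to repeat the steps above. For example, after changing hdfs-site.xml on all VMs, just run stop-dfs.sh and then start-dfs.sh on cluster1.

Likewise, for later restarts simply start the ZooKeeper ensemble and then run start-dfs.sh and start-yarn.sh; formatting is done only once.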

Start HDFS (running `start-dfs.sh` on cluster1 is enough)

Start YARN (run `start-yarn.sh` on cluster3)

NameNode and ResourceManager run on separate machines for performance reasons.

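Start the second ResourceManager daemon (on cluster4); in Hadoop 2.x, start-yarn.sh only starts the ResourceManager on the machine where it is run: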

```shell
yarn-daemon.sh start resourcemanager
```
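For later restarts (no formatting), the order is: ZooKeeper on zookeeper1-3, then start-dfs.sh on cluster1, start-yarn.sh on cluster3, and the second ResourceManager on cluster4. A sketch that drives this from one machine over SSH, assuming password-less root SSH to every host involved (the steps above only set up cluster1 to cluster1/cluster2/zookeeper1-3 and cluster3 to zookeeper1-3, so cluster3 and cluster4 would still need ssh-copy-id) and that /etc/profile puts the Hadoop and ZooKeeper scripts on PATH. `remote_run` and `restart_cluster` are hypothetical names; `DRY_RUN=1` only prints the plan:

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the restart order used in this guide.

# Run a command on a host as root; with DRY_RUN=1 just print it.
remote_run() {
  local host=$1 cmd=$2
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "${host}: ${cmd}"
  else
    # /etc/profile is sourced because non-interactive ssh shells may not
    # have the Hadoop/ZooKeeper bin and sbin directories on PATH (assumption)
    ssh "root@${host}" "source /etc/profile; ${cmd}"
  fi
}

restart_cluster() {
  local zk
  # 1. ZooKeeper first: HDFS and YARN leader election both depend on it
  for zk in zookeeper1 zookeeper2 zookeeper3; do
    remote_run "$zk" "zkServer.sh start" || return 1
  done
  # 2. HDFS from cluster1
  remote_run cluster1 "start-dfs.sh" || return 1
  # 3. YARN from cluster3, then the second ResourceManager on cluster4
  remote_run cluster3 "start-yarn.sh" || return 1
  remote_run cluster4 "yarn-daemon.sh start resourcemanager"
}

# Usage: DRY_RUN=1 restart_cluster   (print the plan)
#        restart_cluster             (run it)
```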

Web UIs

HDFS: http://cluster1:50070

HDFS: http://cluster2:50070

YARN: http://cluster3:8088

Improvement: in hdfs-site.xml, set dfs.datanode.http.address (the address and port of the DataNode's HTTP server, port 50075) to 0.0.0.0:50075.

HDFS HA tests

Upload test

On each of zookeeper1-3, change into the DataNode's block directory so you can watch the uploaded blocks appear:

```shell
# BP-xx stands for the block pool ID, which differs from cluster to cluster
cd /root/hadoop-2.8.1/tmp/dfs/data/current/BP-xx/current/finalized/
```

On cluster1, run:

```shell
hadoop fs -put /root/hadoop-2.8.1.tar.gz /
mkdir 1 && cd 1
hadoop fs -get /hadoop-2.8.1.tar.gz
```
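How many `blk_` files to expect in the finalized directories follows from the file size: a file is split into ceil(size / block size) blocks, and each block is stored on dfs.replication DataNodes. A sketch assuming the Hadoop 2.x defaults of a 128 MB block size (dfs.blocksize = 134217728) and dfs.replication = 3, which this guide's configuration may override; with exactly three DataNodes, every DataNode then holds one replica of every block, each stored as a blk_<id> data file plus a .meta checksum file. `hdfs_blocks` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: number of HDFS blocks for a file of the given size.
# $1 = file size in bytes
# $2 = block size in bytes (default 128 MiB, the Hadoop 2.x default dfs.blocksize)
hdfs_blocks() {
  local size=$1 bs=${2:-134217728}
  echo $(( (size + bs - 1) / bs ))   # integer ceil(size / bs)
}

# Usage on cluster1 (GNU stat):
#   hdfs_blocks "$(stat -c %s /root/hadoop-2.8.1.tar.gz)"
```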

NameNode failover test

HDFS: http://cluster1:50070

HDFS: http://cluster2:50070

Find out which NameNode is active; in my case it was cluster2.
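Besides the web UI, the HA state can be queried from the command line with `hdfs haadmin -getServiceState`. A sketch assuming the NameNode IDs configured in hdfs-site.xml are nn1 and nn2 (nn2 appears in the manual-failover step below; nn1 is assumed); `active_namenode` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the ID of the active NameNode.
# Assumes the NameNode IDs nn1 and nn2; returns 1 if neither reports "active"
# (for example while a failover is still in progress).
active_namenode() {
  local nn
  for nn in nn1 nn2; do
    if [ "$(hdfs haadmin -getServiceState "$nn" 2>/dev/null)" = "active" ]; then
      echo "$nn"
      return 0
    fi
  done
  return 1
}

# Usage on any Hadoop node:
#   active_namenode || echo "no active NameNode"
```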

On cluster2, use jps to find the NameNode process and kill it; cluster1 should take over as active. Then restart the killed NameNode:

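```shell
jps                               # note the PID of the NameNode process
kill <NameNode PID>
hadoop-daemon.sh start namenode   # restart it; it rejoins as standby
```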
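The jps lookup and the kill can be combined so that the PID does not have to be copied by hand. A sketch; `java_pid` is a hypothetical helper that prints the PID for an exact jps name (so `SecondaryNameNode` does not match `NameNode`), or nothing if there is no such process:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the PID(s) of JVMs whose jps name is exactly $1,
# reading `jps` output ("<pid> <name>" per line) on stdin.
java_pid() {
  awk -v name="$1" '$2 == name { print $1 }'
}

# Usage on the host whose NameNode is active (cluster2 in the run above):
#   pid=$(jps | java_pid NameNode)
#   [ -n "$pid" ] && kill "$pid"
```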

Next, power off the cluster1 VM.

At this point cluster1 was the active NameNode; cluster2 needed at least 30 s to take over.

After booting cluster1 again, run:

```shell
hadoop-daemon.sh start zkfc
hadoop-daemon.sh start namenode
```

Manual failover (not recommended)

cluster2 is now active. On cluster1, run:

```shell
hdfs haadmin -transitionToStandby nn2 --forcemanual
hdfs haadmin -getServiceState nn2
```

Checking the YARN state

On any one of zookeeper1-3, run:

```shell
zkCli.sh get /yarn-leader-election/yrc/ActiveBreadCrumb
```
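The ResourceManager HA state can also be queried directly with `yarn rmadmin -getServiceState`. A sketch assuming the ResourceManager IDs in yarn-site.xml (yarn.resourcemanager.ha.rm-ids) are rm1 and rm2; this guide does not show them, so adjust as needed. `rm_states` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "<rm-id> <state>" for each ResourceManager.
# Assumes the IDs rm1 and rm2; a ResourceManager that cannot be queried
# (e.g. its process was killed) is reported as "unreachable".
rm_states() {
  local rm state
  for rm in rm1 rm2; do
    state=$(yarn rmadmin -getServiceState "$rm" 2>/dev/null) || state=unreachable
    echo "${rm} ${state}"
  done
}

# Usage on any Hadoop node:
#   rm_states
```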

Alternatively, open either of:

YARN: http://cluster3:8088

YARN: http://cluster4:8088

Either address automatically redirects to the active ResourceManager.

YARN HA test

In my case cluster3 was active. On cluster4, submit an example job:

```shell
hadoop jar hadoop-2.8.1/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.1.jar pi 5 5
```

While the job is running, kill the ResourceManager process on cluster3, then restart it:

```shell
kill <ResourceManager PID>             # PID from jps
yarn-daemon.sh start resourcemanager   # restart it; it rejoins as standby
```

YARN HA does not behave like HDFS HA: if a running YARN application is interrupted by the ResourceManager failure, the application fails.

Adding DataNodes dynamically and managing replica counts

Kill the DataNode process on zookeeper3.

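Then check the node counts at http://cluster1:50070: it shows Live Nodes: 2, Dead Nodes: 1. The number of block replicas does not increase, because with the default replication factor of 3 and only two live DataNodes there is nowhere to place a third replica.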
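Note that the NameNode does not mark a DataNode as dead immediately. It waits 2 × dfs.namenode.heartbeat.recheck-interval + 10 × dfs.heartbeat.interval, which with the Hadoop 2.x defaults (300000 ms and 3 s) is 2 × 300 s + 10 × 3 s = 630 s, i.e. 10.5 minutes before "Dead Nodes: 1" appears. A quick calculator for other settings (`dead_timeout_s` is a hypothetical helper):

```shell
#!/usr/bin/env bash
# Hypothetical helper: seconds until the NameNode declares a silent DataNode dead.
# $1 = dfs.namenode.heartbeat.recheck-interval in ms (default 300000)
# $2 = dfs.heartbeat.interval in s (default 3)
dead_timeout_s() {
  local recheck_ms=${1:-300000} heartbeat_s=${2:-3}
  echo $(( 2 * recheck_ms / 1000 + 10 * heartbeat_s ))
}

# dead_timeout_s            -> 630 (10.5 minutes with the defaults)
# dead_timeout_s 30000 3    -> 90
```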

```shell
# the DataNode's storage metadata (clusterID, datanodeUuid, ...)
vim /root/hadoop-2.8.1/tmp/dfs/data/current/VERSION
```

A new DataNode only needs the Hadoop package; after that, just start the DataNode.

Clone zookeeper1 into a new VM named zookeeper4 (IP 192.168.1.228) and run the following on zookeeper4:

```shell
# The clone still carries zookeeper1's DataNode storage (including its
# datanodeUuid in tmp/dfs/data/current/VERSION), so wipe it first
rm -rf /root/hadoop-2.8.1/tmp

# Register the new host and add it to the slaves file
echo "192.168.1.228 zookeeper4" >> /etc/hosts
echo "zookeeper4" >> /root/hadoop-2.8.1/etc/hadoop/slaves

# Distribute both files to every other node
for host in cluster1 cluster2 cluster3 cluster4 zookeeper1 zookeeper2 zookeeper3; do
  scp /etc/hosts "root@${host}:/etc/hosts"
  scp /root/hadoop-2.8.1/etc/hadoop/slaves "root@${host}:/root/hadoop-2.8.1/etc/hadoop/slaves"
done

# Start the DataNode and check that blocks are replicated to it
hadoop-daemon.sh start datanode
ll /root/hadoop-2.8.1/tmp/dfs/data/current/BP-xx/current/finalized/subdir0/subdir0
```
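Besides the web UI, `hdfs dfsadmin -report` lists the DataNodes from the command line. A sketch that extracts the live count, assuming the report contains a line of the form `Live datanodes (N):` as printed by Hadoop 2.x; `live_datanodes` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print N from a "Live datanodes (N):" line in
# `hdfs dfsadmin -report` output read on stdin; prints nothing if absent.
live_datanodes() {
  sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*$/\1/p'
}

# Usage on cluster1; the count should reach 4 once zookeeper3's DataNode
# is running again alongside zookeeper4:
#   hdfs dfsadmin -report | live_datanodes
```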

Now start the DataNode on zookeeper3 again:

```shell
hadoop-daemon.sh start datanode
```

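There are now four DataNodes and four replicas of the blocks, more than the replication factor requires. Hadoop detects this over-replication and automatically deletes the surplus replicas.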