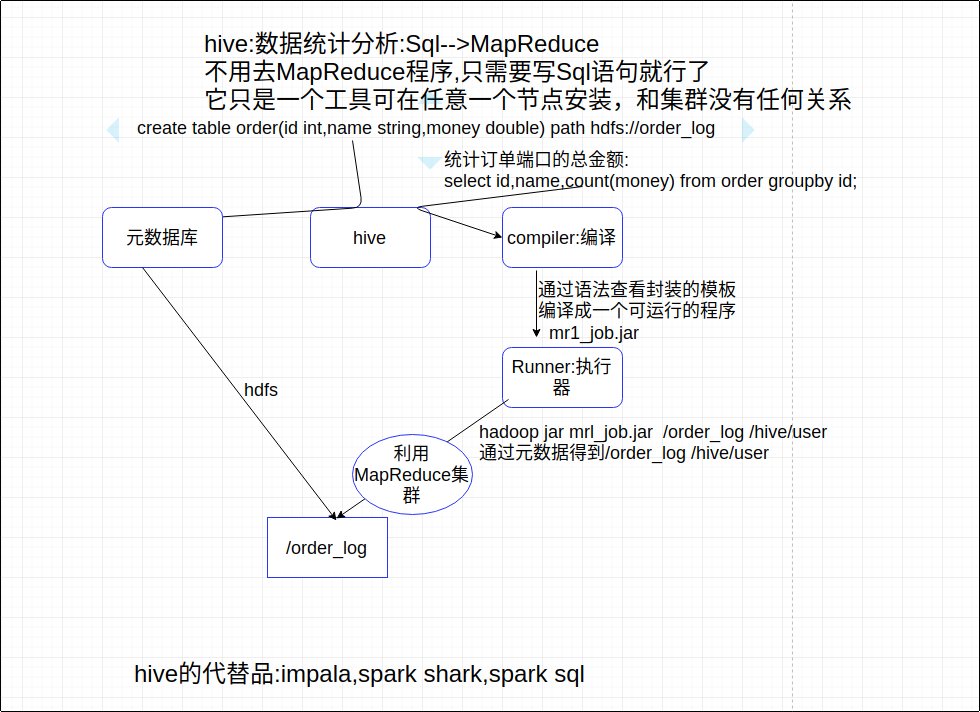

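Hadoop tools (1): Hive. Hive is just a client tool: it can be installed on any one node, and installing it requires no changes to the cluster itself.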

Installing and using Hive (on cluster1)

Install MySQL


Install Hive

scp ./apache-hive-2.1.1-bin.tar.gz root@cluster1:/root
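Once the tarball is on cluster1 it has to be unpacked. A minimal sketch, assuming it is extracted in place under /root (the target directory is an assumption; the post does not say where Hive was unpacked):

```shell
# Assumption: the tarball was copied to /root by the scp command above.
HIVE_TARBALL=/root/apache-hive-2.1.1-bin.tar.gz
# The archive unpacks into a directory named after the tarball.
HIVE_DIR="$(dirname "$HIVE_TARBALL")/$(basename "$HIVE_TARBALL" .tar.gz)"

if [ -f "$HIVE_TARBALL" ]; then
  # Extract next to the tarball, then show the unpacked layout.
  tar -xzf "$HIVE_TARBALL" -C "$(dirname "$HIVE_TARBALL")"
  ls "$HIVE_DIR"
else
  echo "tarball not found: $HIVE_TARBALL" >&2
fi
```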


Place the MySQL JDBC driver jar (mysql.jar) in the hive/lib directory
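This step could look roughly like the sketch below. The helper name `install_jdbc_jar` and both paths in the example call are hypothetical; the actual driver file name depends on the connector version you downloaded.

```shell
# Hypothetical helper: copy a JDBC driver jar into Hive's lib directory.
install_jdbc_jar() {
  jar=$1
  hive_home=$2
  [ -f "$jar" ] || { echo "jar not found: $jar" >&2; return 1; }
  [ -d "$hive_home/lib" ] || { echo "no lib dir under: $hive_home" >&2; return 1; }
  cp "$jar" "$hive_home/lib/"
}

# Example call; both paths are assumptions, adjust them to your layout.
install_jdbc_jar /root/mysql.jar /root/apache-hive-2.1.1-bin \
  || echo "JDBC driver was not installed" >&2
```

Hive loads every jar in its lib directory at startup, which is why copying the file there is enough.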


Configure environment variables
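A minimal sketch of the variables Hive typically needs, assuming the tarball was unpacked to /root/apache-hive-2.1.1-bin (that path is an assumption):

```shell
# Assumption: Hive was unpacked to /root/apache-hive-2.1.1-bin.
export HIVE_HOME=/root/apache-hive-2.1.1-bin
# Put the hive and schematool launchers on PATH.
export PATH="$PATH:$HIVE_HOME/bin"
```

To make the settings permanent, these two lines would usually go into /etc/profile or ~/.bashrc, followed by `source` on that file.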


Start Hive (it runs on top of the Hadoop cluster, so the cluster must be up)
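The original commands for this step are not preserved, so the following is only a sketch of a typical Hive 2.x startup. It assumes hive-site.xml already points the metastore at the MySQL instance; `missing_cmds` is a hypothetical helper.

```shell
# Hypothetical helper: print each named command that is not on PATH.
missing_cmds() {
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

missing=$(missing_cmds hdfs schematool hive)
if [ -n "$missing" ]; then
  echo "not on PATH:" $missing >&2
else
  # Hive needs HDFS, so check that the cluster is reachable first.
  hdfs dfsadmin -report >/dev/null || echo "HDFS is not reachable" >&2
  # One-time step on Hive 2.x: create the metastore tables in MySQL.
  # Assumes hive-site.xml already points at the MySQL instance.
  schematool -dbType mysql -initSchema
  # Open the interactive Hive CLI.
  hive
fi
```

The schema initialization only needs to succeed once; on later runs it can be skipped and `hive` started directly.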


Create some sample data:

    vim /root/d.data
    1 iphone8 64G 8000
    2 iphone7 64G 7000
    3 iphone6 64G 6000
    4 iphone5 64G 5000
    5 iphone4 64G 4000

Hive syntax
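As an illustration of the syntax, here is a sketch that creates a table matching the space-separated layout of /root/d.data, loads the file, and queries it. The table name `phones` and the column names are hypothetical; the original statements are not preserved.

```shell
# Write a small HiveQL script. Table and column names are hypothetical.
HQL_FILE=$(mktemp)
cat > "$HQL_FILE" <<'EOF'
CREATE TABLE IF NOT EXISTS phones (
  id INT,
  model STRING,
  storage STRING,
  price INT
)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ' ';

LOAD DATA LOCAL INPATH '/root/d.data' INTO TABLE phones;

SELECT model, price FROM phones WHERE price >= 6000;
EOF

# Run the script only where the hive CLI is installed.
if command -v hive >/dev/null 2>&1; then
  hive -f "$HQL_FILE"
else
  echo "hive not found; script left at $HQL_FILE" >&2
fi
```

`LOAD DATA LOCAL INPATH` copies the file from the local filesystem into the table's directory in HDFS; without `LOCAL`, the path is read from HDFS instead.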


Use MySQL to view the metadata Hive recorded for the uploaded table
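Hive keeps its metadata in metastore tables such as `DBS` and `TBLS`. A sketch of a query against them, assuming the metastore database is named `hive` (the real name is whatever the JDBC URL in hive-site.xml specifies):

```shell
# Assumption: the metastore database is named "hive"; the real name comes
# from javax.jdo.option.ConnectionURL in hive-site.xml.
METASTORE_DB=hive
SQL_FILE=$(mktemp)
cat > "$SQL_FILE" <<'EOF'
-- One row per Hive table, with the database it belongs to.
SELECT d.NAME AS db_name, t.TBL_NAME, t.TBL_TYPE
FROM TBLS t
JOIN DBS d ON t.DB_ID = d.DB_ID;
EOF

# Printed rather than executed, because mysql prompts for a password.
echo "mysql -u root -p $METASTORE_DB < $SQL_FILE"
```

Only the table definitions live in MySQL; the rows themselves stay in HDFS.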


Creating a database or table with Hive amounts to creating a directory in HDFS.
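The mapping from names to directories can be sketched as follows, assuming the default warehouse location /user/hive/warehouse (controlled by `hive.metastore.warehouse.dir`). The helper `warehouse_path` and the names `shop` and `phones` are hypothetical.

```shell
# Default value of hive.metastore.warehouse.dir; yours may differ.
WAREHOUSE=/user/hive/warehouse

# Hypothetical helper: HDFS directory for a managed table.
# Tables in "default" sit directly under the warehouse root; other
# databases get a "<name>.db" directory.
warehouse_path() {
  db=$1
  table=$2
  if [ "$db" = "default" ]; then
    printf '%s/%s\n' "$WAREHOUSE" "$table"
  else
    printf '%s/%s.db/%s\n' "$WAREHOUSE" "$db" "$table"
  fi
}

warehouse_path default phones   # prints /user/hive/warehouse/phones
warehouse_path shop phones      # prints /user/hive/warehouse/shop.db/phones

# List the real directories where the hdfs CLI is available.
if command -v hdfs >/dev/null 2>&1; then
  hdfs dfs -ls -R "$WAREHOUSE" || echo "could not list $WAREHOUSE" >&2
fi
```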